When Magic Becomes Infrastructure: The Biggest AI Shift You Didn't See Coming

The Biggest Shift in AI Nobody Is Talking About Loudly

Consumer chatbots have had their time in the spotlight. Now all eyes turn toward something far bigger: heavy infrastructure for autonomous operation. During the final week of April 2026, Anthropic, OpenAI, Google, Mistral, and Manus all released internal documents revealing a shared strategy — transforming AI from entertainment into critical enterprise infrastructure.

This is not hype. These are the blueprints of the next industrial revolution.

When Magic Becomes Infrastructure

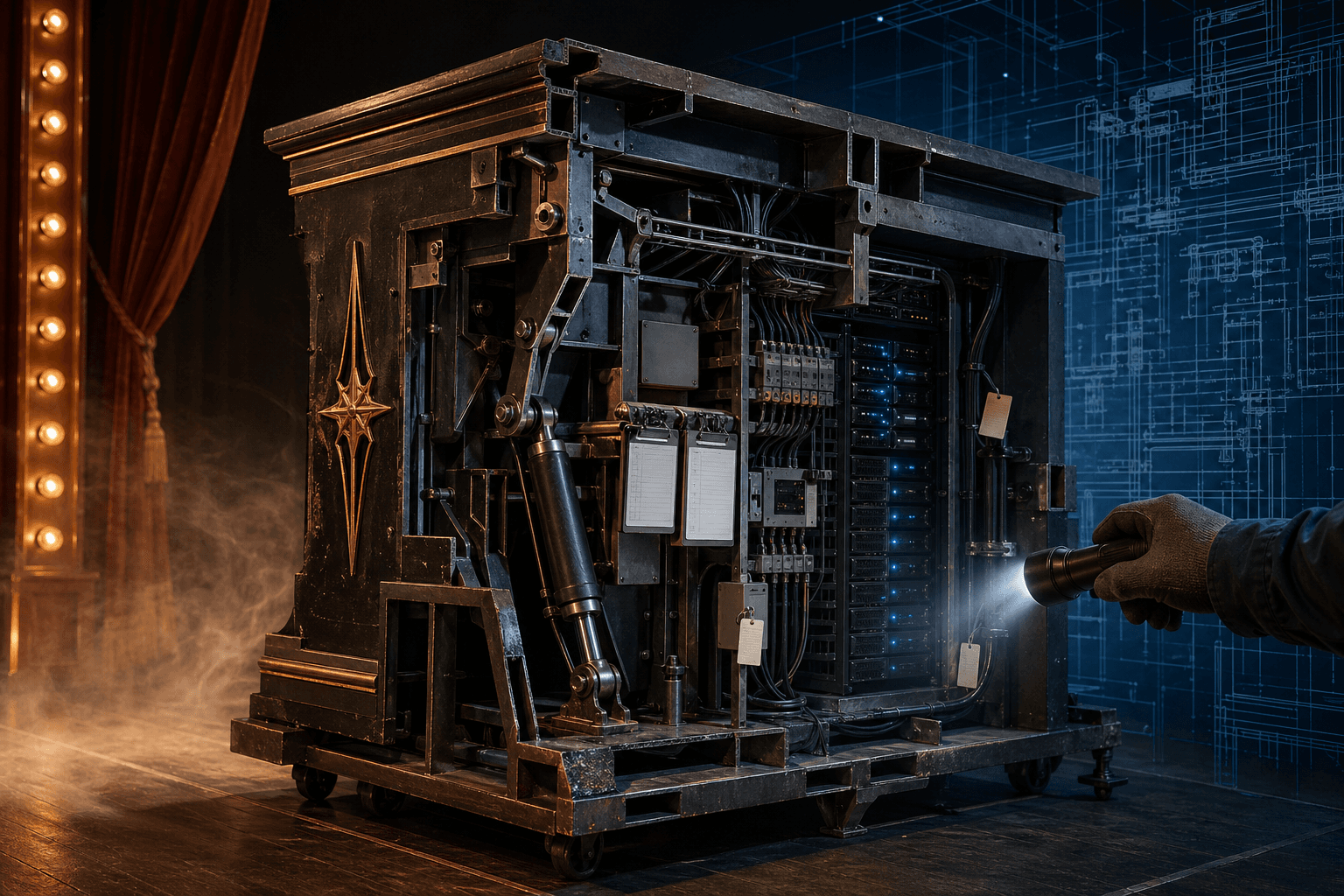

When you first watch a magician saw someone in half, it's pure magic — smoke, mirrors, dramatic music. You're not thinking about the hidden hinges on the box. You just want to see the trick.

But imagine that same magician is suddenly hired to build the structural steel supports for a 70-story skyscraper. You don't want smoke and mirrors anymore. You want absolute boring reliability. You want to inspect the blueprints, verify the welds. The magic has to become infrastructure.

That exact transition is happening in the AI industry right now. Consumer magic is taking a back seat — replaced by heavy-duty enterprise plumbing. Here's what we learned from internal sources during the final week of April 2026.

🔍 Anthropic — Transparency as Currency

Anthropic recently published an unusually honest technical postmortem on their coding agent, Claude Code. Instead of burying their heads in the sand, they openly admitted the agent had suffered significant quality degradation.

Three specific bugs were laid bare:

- Rushed reasoning: They had forced the agent to "medium" reasoning effort to save latency — essentially telling the AI to rush its homework

- The amnesia bug: A context clearing feature malfunctioned and wiped the agent's memory on every single conversational turn

- The brevity rule: They capped output to 25 words between tool calls — which choked off the model's working memory

Anthropic rolled all changes back. And their next move was even more interesting — they shipped updated managed agent memory into public beta.

What does the 100 KB limit mean?

Each memory item is hard-capped at 100 KB. This forces developers to architect modular file systems instead of dumping the entire codebase into context — like indexing a library instead of trying to memorize every book.

But with read-write memory comes prompt injection risks. If an untrusted user feeds malicious code and the agent saves it into memory, that instruction is now persistent. It will be recalled on future tasks, even for other users. Anthropic is now forcing developers to sanitize everything committed to long-term memory.

🧠 OpenAI — Hardening the Nervous System

OpenAI is burying their most powerful models deep into the plumbing. GPT 5.5 and GPT 5.5 Pro have officially moved into the API — with a massive one million token context window.

The difference between chat interfaces and APIs is enormous:

- A model isolated in a consumer chat is like a race car engine on a dyno stand — you can't build automated business processes around it

- When it moves to an API with batch processing, developers can programmatically route 10,000 customer service logs to it at 3 AM

OpenAI also updated GPT 5.5's system card specifically for API safeguards. If an AI hallucinates in a chat window, it's mildly annoying. Via an API directly connected to your production database, it's catastrophic.

Unix Socket transport is the key here. Think of it like passing a folded note under the table to someone in the same room — it never touches the public internet. It's lightning fast and extremely secure, perfect for backend systems where the AI agent and database live on the same physical server.

Combined with "sticky environments" — where the network connection maintains the AI's working state through interruptions — developers get predictability like never before. Codex now also reports reasoning token usage natively in JSON output, so developers can track exactly how much money the AI burns while it thinks before it acts.

🏗️ Google — Security Gates in the Back, Party in the Front

Google is playing both sides right now. They released the Gemini Drop — a heavily consumer-facing experience with native Mac access, persistent project notebooks, and "personal intelligence" that deeply integrates into your daily life.

But on the developer side, it's an entirely different vibe. Secure environment loading and strictly enforced workspace trust in headless modes.

Headless mode explained: An AI agent program running without a graphical interface — no operator clicking "approve." If a malicious script sneaks into the workspace, the AI might blindly execute it. Google's new trust enforcement actively pauses the agent and requires cryptographic verification of the workspace directory before a single command is allowed.

Interestingly, "personal intelligence" is actively excluded in the European Economic Area, UK, and Australia. This isn't a technical failure — it's regulatory guardrails keeping the product viable. GDPR makes that kind of ambient data collection an absolute legal minefield.

🔑 Mistral — The Automated Valet Key

If you lock down an AI agent so tightly that it can't access anything without a human supervisor, how do you get it to do real work? The authentication bottleneck is arguably the single biggest friction point in enterprise automation right now.

The traditional OAuth protocol is like a temporary valet key: Every time the agent tries to access a tool, it's interrupted — "excuse me, I need the key again." It breaks the entire concept of autonomy.

Mistral's new Python SDK introduced a workflow surface with a helper function called "Execute with Connector Rothesync" — despite the incredibly clunky name, what it actually does is pure elegance. When a long-running workflow hits a tool requiring fresh authentication, it gracefully pauses, handles login callback in the background, grabs the new access token, and automatically resumes the agent exactly where it left off.

They also expanded prompt cache keys — saving massive compute costs when an agent analyzes thousands of documents against the same rule set.

🚀 Manus — From Task API to Deployment Platform

Manus is undergoing a fascinating evolution — transitioning from a simple task API into a highly opinionated deployment platform.

New endpoints:

- Website publishing — the agent can build a site structure that developers can programmatically publish

- Asynchronous deployment — you must poll the status endpoint to verify when the rollout is complete

- Latest checkpoint only — you can never roll back to a previous version via the API

The wildest detail: There is no public unpublish endpoint. If you want to take a site down entirely, you cannot do it via the API — you must email their support team.

Why? Programmatic teardown is incredibly dangerous. If an autonomous agent can unpublish a website, a slight hallucination could delete your entire production corporate site at 2 AM. Manus builds safety by requiring human oversight for the most destructive actions.

Manus also instituted strict shared rate limits — $10 per minute for task creation. This isn't consumer pricing; it's industrial scale protection. They don't want hobbyists spamming the system and degrading performance for enterprise clients who rely on predictable behavior.

🔮 What Does It All Mean?

We've traced the entire enterprise AI lifecycle:

- Anthropic is building transparent, manageable memory — proving radical honesty about bugs actually builds developer trust

- OpenAI is hardening the nervous system — moving their most capable models into the API and locking down local server communication

- Google is fencing in the developer backyard with strict trust verification while navigating complex global data laws

- Mistral acts as the automated valet — smoothing out painful permission bottlenecks so workflows don't constantly crash

- Manus is turning agents into full-stack deployable webmasters with strict safety constraints

Thoughts on How This Affects the Future

The magic show is officially over and the hard hats are on. The AI industry is really growing up.

The foundational layer is here. We're finally moving toward a reality where AI agents can reliably execute complex multi-step tasks over long time horizons without requiring constant human babysitting.

When agents inevitably start negotiating rate limits, access keys, and complex security protocols directly with each other's agents — completely in the background — the defining question emerges:

When the systems we built start writing their own blueprints, who is inspecting the welds?

That is the defining question of the next era of computing.

Sign up for our newsletter to get deep dives like this delivered straight to your inbox — so you don't miss the next shift before everyone else does.

The Forge newsletter

Get new articles in your inbox

Pick the topics you care about. No noise, at most one email a week.

We follow GDPR. Unsubscribe anytime.