Claude is moving into everyday workflows

When Claude no longer only answers inside a chat window but can connect to documents, spreadsheets, design tools, and business data, the question for small teams changes from “which model is best?” to “which workflow are we ready to let AI support first?” Today's Claude signal is less about another Claude Code button and more about the practical move from chat to governed, reviewable workflows.

Today's signal: connectors, MCP, and ready-made agent patterns

Anthropic has shown a clear direction in recent days: Claude should work closer to the tools where work already happens. The Claude Connectors page describes a directory where connectors can be filtered by what they work with (Claude, Claude Code, Skills), by industry, and by whether they have read or write access. A connector is an approved link between Claude and an external tool or data source.

Source: Claude Connectors

MCP (Model Context Protocol) is the open standard Anthropic created so that AI apps can connect to tools and data sources in a consistent way. For non-technical teams, the important point is simple: MCP makes integrations more repeatable, but it does not replace permissions, test data, or human approvals.

Source: Model Context Protocol in Claude documentation

Anthropic also shows examples from financial services where ready-made agent templates package instructions, connectors, and subagents for tasks such as pitch materials, KYC review, general ledger reconciliation, and month-end close. An agentic workflow here means Claude can plan multiple steps, use tools, and hand results over for review; it does not mean the human disappears from the process.

Source: Anthropic: Agents for financial services

Why this matters for small Swedish teams

For Hammer Automation's audience, this is not only enterprise news. It is a useful template for how smaller organizations should think before connecting AI to real systems.

It especially matters for:

- Owners and solo operators who want less repetitive administration but do not have an internal developer team.

- Schools and educators who want staff to test AI support without mixing in sensitive student data too early.

- Small finance, sales, and support teams where much of the work happens in documents, email, spreadsheets, and lightweight systems.

- Nordic organizations with EU requirements where permission, traceability, and human control matter as much as time savings.

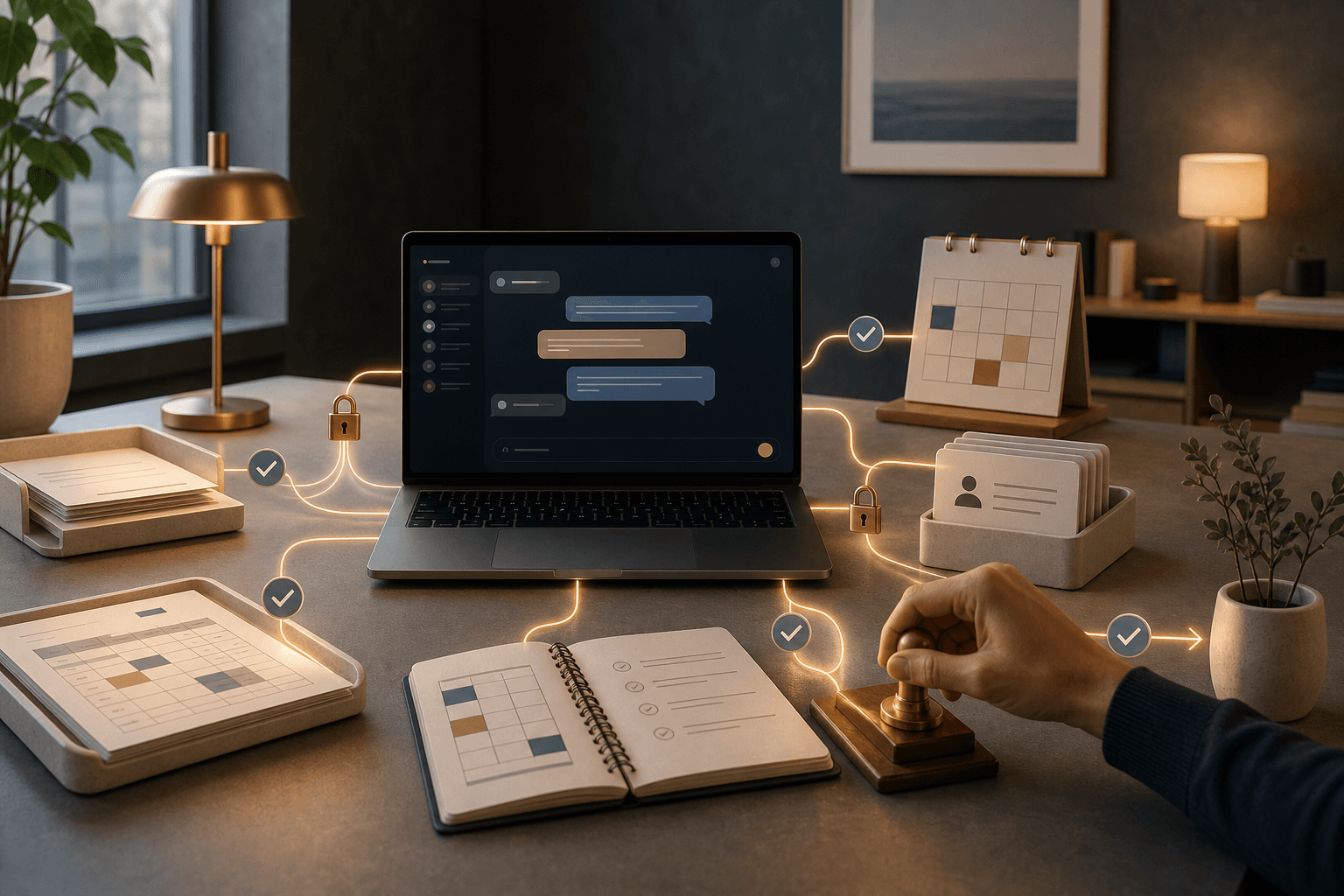

The practical lesson: do not start with “connect everything”. Start with a bounded workflow where Claude may read, structure, and suggest, while a human approves before anything is sent, posted, booked, or published.

What you can test today

Choose a workflow that is boring today but not business-critical. Examples:

- Summarize the week's customer questions from anonymized notes.

- Create a first meeting agenda draft from previous decisions.

- Sort open quote requests by next step.

- Compare two policy drafts and mark differences.

Use Claude desktop or Claude in the browser to map the process first. If the workflow later connects to systems through MCP, Claude Connectors, or Claude Code, the next step should be a small Tool Forge review: which data sources are needed, which should be read-only, which may be changed, and where approval must happen.
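For teams that prefer to write the review down in a structured form, the four questions above can be captured in a few lines of Python. This is only a minimal sketch under stated assumptions: the `DataSource` record, the `review_gaps` helper, and the example source names are all hypothetical illustrations, not part of MCP, Claude Connectors, or any Anthropic tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class DataSource:
    """One row in a hypothetical review sheet (illustrative only)."""
    name: str
    access: str                      # "read" or "write"
    approver: Optional[str] = None   # role that signs off on changes


def review_gaps(sources: List[DataSource]) -> List[str]:
    """Return the names of sources that may be changed but have no approver."""
    return [s.name for s in sources if s.access == "write" and not s.approver]


# Example inventory with made-up source names.
sources = [
    DataSource("shared_calendar", "read"),
    DataSource("quote_spreadsheet", "write", approver="sales lead"),
    DataSource("crm_records", "write"),
]

print(review_gaps(sources))
```

Any name the helper returns is a source where the workflow could change data without a named approver, which is a reasonable signal to keep that source read-only for now.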

Where human control belongs

The easiest mistake is to treat a connector as a shortcut around process design. Do the opposite. Decide first where Claude may:

- Read: for example documents, calendar entries, ticket lists, or anonymized extracts.

- Suggest: for example replies, priorities, summaries, and control questions.

- Prepare: for example drafts, lists, spreadsheet structures, or work orders.

- Never do without approval: send customer email, change records, post accounting entries, delete, publish, or make decisions about people.

This is where Mindset Forge is often the first step: help the team agree on the rules before tools are connected.
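If the team later wants these rules to be checkable rather than only agreed verbally, the four levels (read, suggest, prepare, never without approval) could be expressed as a small approval gate. The sketch below is an assumption-laden illustration: the `Level` enum, the `POLICY` table, the action names, and the `may_proceed` function are hypothetical and do not correspond to any Claude or MCP API.

```python
from enum import Enum
from typing import Optional


class Level(Enum):
    READ = "read"
    SUGGEST = "suggest"
    PREPARE = "prepare"
    NEEDS_APPROVAL = "needs_approval"


# Hypothetical action names mapped to the levels the team agreed on.
POLICY = {
    "read_ticket_list": Level.READ,
    "suggest_reply": Level.SUGGEST,
    "prepare_draft": Level.PREPARE,
    "send_customer_email": Level.NEEDS_APPROVAL,
    "post_accounting_entry": Level.NEEDS_APPROVAL,
}


def may_proceed(action: str, approved_by: Optional[str] = None) -> bool:
    """Allow low-risk actions; require a named human for everything else."""
    # Actions missing from the table fall back to the strictest level.
    level = POLICY.get(action, Level.NEEDS_APPROVAL)
    if level is Level.NEEDS_APPROVAL:
        return approved_by is not None
    return True


print(may_proceed("suggest_reply"))
print(may_proceed("send_customer_email"))
print(may_proceed("send_customer_email", approved_by="owner"))
```

The design choice worth noting is the default: an action nobody has classified is treated as needing approval, so a gap in the rules blocks the action instead of letting it through.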

Try this prompt this week

Use this prompt in Claude desktop or Claude in the browser. Do not paste sensitive personal data, customer data, or student data. Describe the workflow with sample data or anonymized fields.

You are my AI implementation coach. Help me map a small workflow where Claude can support our team without taking over decisions.

Workflow: [describe the process, for example weekly customer issue summary, meeting agenda, quote sorting, or policy review]

Team: [roles and approximate size]

Tools we use: [email, calendar, spreadsheet, CRM, LMS, documents, ticketing system]

Sensitive data that must NOT be shared: [list]

Give me:

1. A safe first test that only uses anonymized or fictional data.

2. Which data sources Claude would need to read if we later use a connector or MCP.

3. Which steps Claude may suggest but not perform by itself.

4. At least five human approval points.

5. A simple checklist for when the test is ready to move from experiment to everyday routine.

6. Three risks that should make us stop or limit the automation.

Answer practically and briefly, for a small Swedish team with no internal AI developer.

A good result looks like this:

- You get a test that can run without real customer or student data.

- Claude separates reading, suggestions, and actions.

- There are clear stop points where a human reviews the work.

- The next step is small enough to test in one afternoon.

What we are watching next

Claude Code 2.1.138 landed in the npm registry on May 9 with internal fixes, following 2.1.137, which fixed VS Code activation on Windows. That is a stability signal rather than a major product announcement. Together with the connector directory, the MCP roadmap, and Anthropic's examples of ready-made agent templates, it points to the same everyday question: how do we build AI workflows that are useful, understandable, and safe enough for small teams?

Sources: Claude Code changelog, npm registry for @anthropic-ai/claude-code, MCP Roadmap 2026